As the pandemic rages through India (and different parts of the world) we’ve all been stuck at home for months on end. Everyone’s skeptical, the mood ain’t great and it’s hard to remain cheerful. Wouldn’t it be great to take a break from 2020 and reflect back on the times when meeting people, attending conferences, travelling and generally being in a happy state of mind were a thing? I decided to do just that and pen another year-in-review post. Yes, it’s 10 full months since the year ended but we posted a delayed review last year as well and now we can call ourselves trendsetters.

2019 was a year of consolidation and gradually stepping forward. We undertook twelve different engagements of varying sizes and evolved throughout the year. Let’s look at some important developments, milestones and highlights for Miranj from 2019.

Helping Organisations Think and Strategise

We started out as a studio with two key skills — building websites, and finishing them on time and within budget. The latter skill is better known as project management.

The first step in any project was understanding the requirements. The process relied heavily on clients knowing what they wanted and being able to articulate it. We often got frustrated when clients were vague about their requirements because we considered clear requirements a prerequisite for our work. But as we gathered more experience our thinking evolved from “clients don’t know what they want” to “we need to help them understand their needs”. Back in 2016, this translated into our very first project discovery workshop. The process was rudimentary, relying mostly on frameworks from other workshops we’d participated in previously. But we stuck to it and started conducting workshops before every sizeable project.

Over the years these workshops have undergone several rounds of iteration and have achieved a clear structure — understanding the problem-space, defining the solution-space and finally discussing the execution and project management. We usually conduct the workshop at the client location to ensure representation from as many departments as possible. Then we return to our base for the execution armed with clarity on the goals, priorities and the overall scope of the project. It makes our work more focussed, smoothens the execution process and helps achieve greater impact.

Discovery and Strategy Workshops have become an essential component of every mid-to-large size project we undertake, and in 2019 we started offering them as a stand-alone service. The workshops not only help us acquire a deep understanding of an organisation’s needs but also help clients make better strategic decisions about their website and, at times, even their business. Last year we conducted 4 such workshops. In the future, we hope to offer this to organisations that are thinking about transforming their website but need a good decision framework. If you’re aware of any organisation that could benefit from such an exercise, do write to us.

Dominated by Non-Profit Engagements

At Miranj we’re mindful about the projects we take on. Very early in our journey, we wrote about our sweet spot. Gradually we also articulated our purpose. We keep reflecting on our experiences and defining what type of work we find meaningful. From the early days we found fulfilment in engaging with folks who work for society: organisations in the development sector, projects that empower people, individuals who fight for rights, and so on. Gradually we also started working in the academic sector. Over the past 9 years, we have worked with many CSOs, educational institutions and other not-for-profit institutions.

We’ve always had an affinity for the not-for-profit sector. Among all the enquiries that land on our plate these are the ones that make us most excited and give us a strong sense of fulfilment once we’ve completed the project.

2019 was a special year. We hit a new milestone. Our revenue from not-for-profit projects touched nearly 2x our commercial revenue. It’s hard to predict if we’ll be able to repeat this feat in the coming years but we definitely hope we do.

Surprising Cross-Geography Collaborations

At Miranj we spend far more time discussing our craft and honing our skills than thinking about business. We’ve mostly been discovered through word of mouth referrals. Consequently, our projects have been predominantly based in India. But unlike previous years, in 2019 we ended up engaging with clients and collaborators from 6 different countries. This is a significant number for a tiny team like ours. It’s very reassuring to know that somehow people from different parts of the world have managed to stumble upon us. One such email had dropped in from Paul Manem, a web designer-developer based in Cambodia, in late 2018. We were overjoyed to meet someone like him who shared so many of our work ideologies. Last year we ended up collaborating with him on a project and it was a very pleasurable experience.

Massive Technical Upgrades

Sizeable software is rarely rewritten from the ground up. When it is, the rewrite is usually a big leap forward on one hand and disruptive on the other. In 2018 Craft CMS made one such jump with Craft 3. Three years and three months in the making, Craft 3 was a completely re-architected piece of software. Big changes, even those for the better, are disruptive. All Craft plugins had to be rewritten, and website upgrades needed significant work. In 2019, we undertook the highly technical upgrade of two of our largest websites (IndiaBioscience and Guiding Tech) from Craft 2 to Craft 3. The upgrade also allowed us to refactor some underlying code and in turn achieve better performance, stability, SEO and authoring experience.

The upgrade to Craft 3 helped us grow a lot in the technical direction. We learned a new PHP framework (Yii2), adopted the Composer package manager, deployed a SiteDiff tool and upgraded all our Craft plugins.

Working with a Big Name

One of our highlights from last year was our work with Azim Premji University. We worked on a new website for the university with the help of two fellow collaborators, Shalini Sekhar and Kavya Murthy. Working with the university was a surprisingly enjoyable experience but, unfortunately, the COVID-19 pandemic caused a significant delay in the launch schedule. We are hopeful that the launch will happen soon. Later in the year we also went on to work with the Azim Premji Philanthropic Initiatives to help them strategise their future web presence.

We feel quite lucky to have had an opportunity to work with the socially conscious institutions set up by Mr Azim Premji who’s widely regarded as the most generous giver in corporate India. Small teams rarely get to work with such widely recognisable names and hopefully, in the coming years, we’ll get to work with more such reputed institutions.

Dot All 2019

Dot All is an annual international conference on Craft CMS and modern web development. We’ve been participating in this conference every year since its very first edition in 2017. In 2019 Dot All took place in Montréal, Canada between September 18th and 20th. It was an exciting opportunity to hop on a couple of long flights and meet the amazing Craft Community. And of course, visiting a new country.

Image Courtesy Dot All

For Miranj it was a special one since Prateek’s proposal titled “Fortifying Craft for High Traffic” was accepted by the Dot All organisers. Prateek’s talk covered our learnings from the Guiding Tech website i.e. how we’ve optimised a low-powered server to handle millions of visitors each month by strategically caching the website at two places — Nginx (using FastCGI Micro-Caching) and flag-based template caches in Craft CMS. The talk received great reviews from the participants, and generated instant interest among the performance lovers in the community. It was a validation of how much we’ve improved over the years.

Image Courtesy Dot All

Just like every year, the conference was an opportunity to catch up with old friends and make new ones. We grabbed fresh beers, shared dumplings and also went on a walking tour around the old city of Montréal.

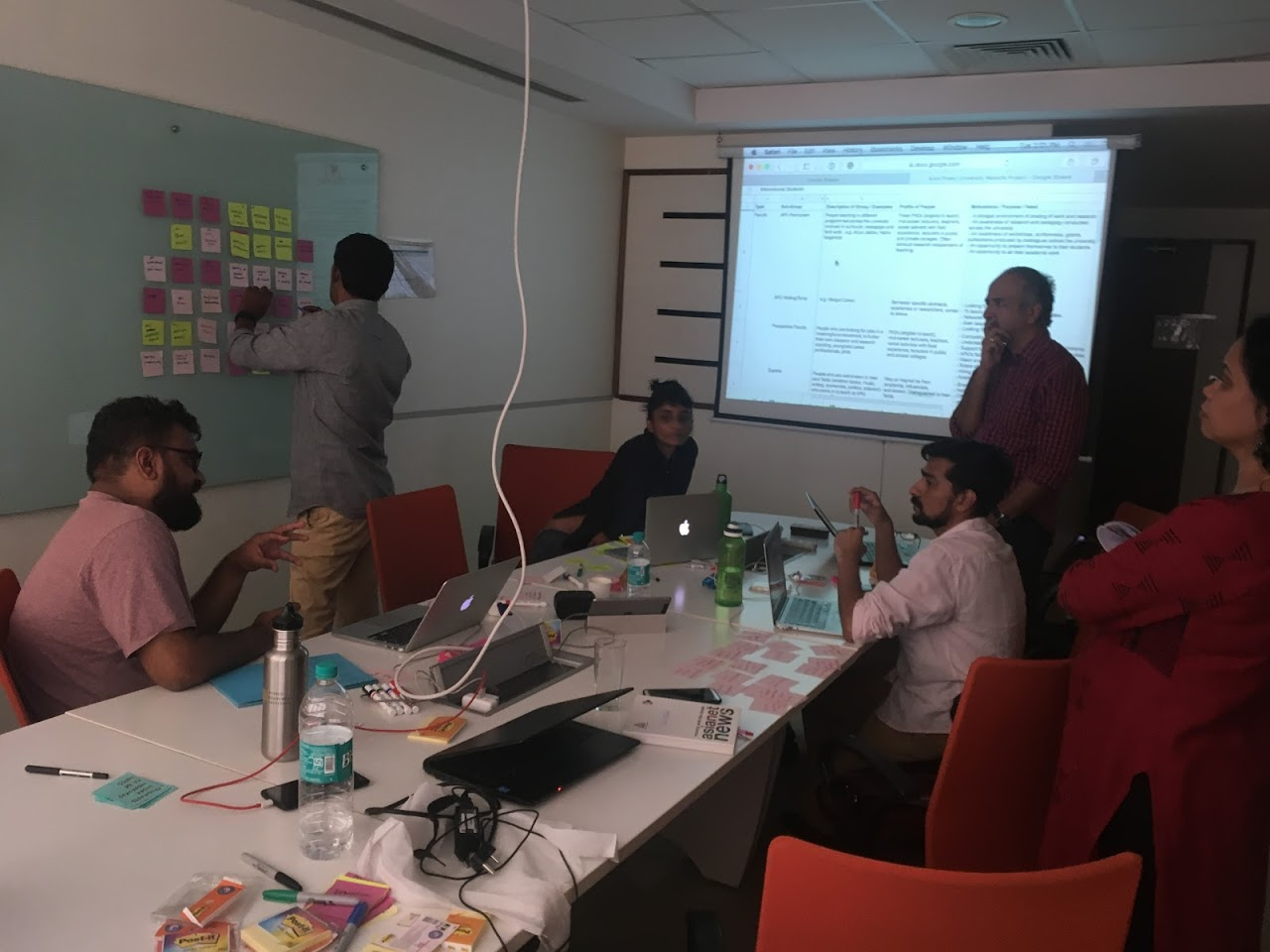

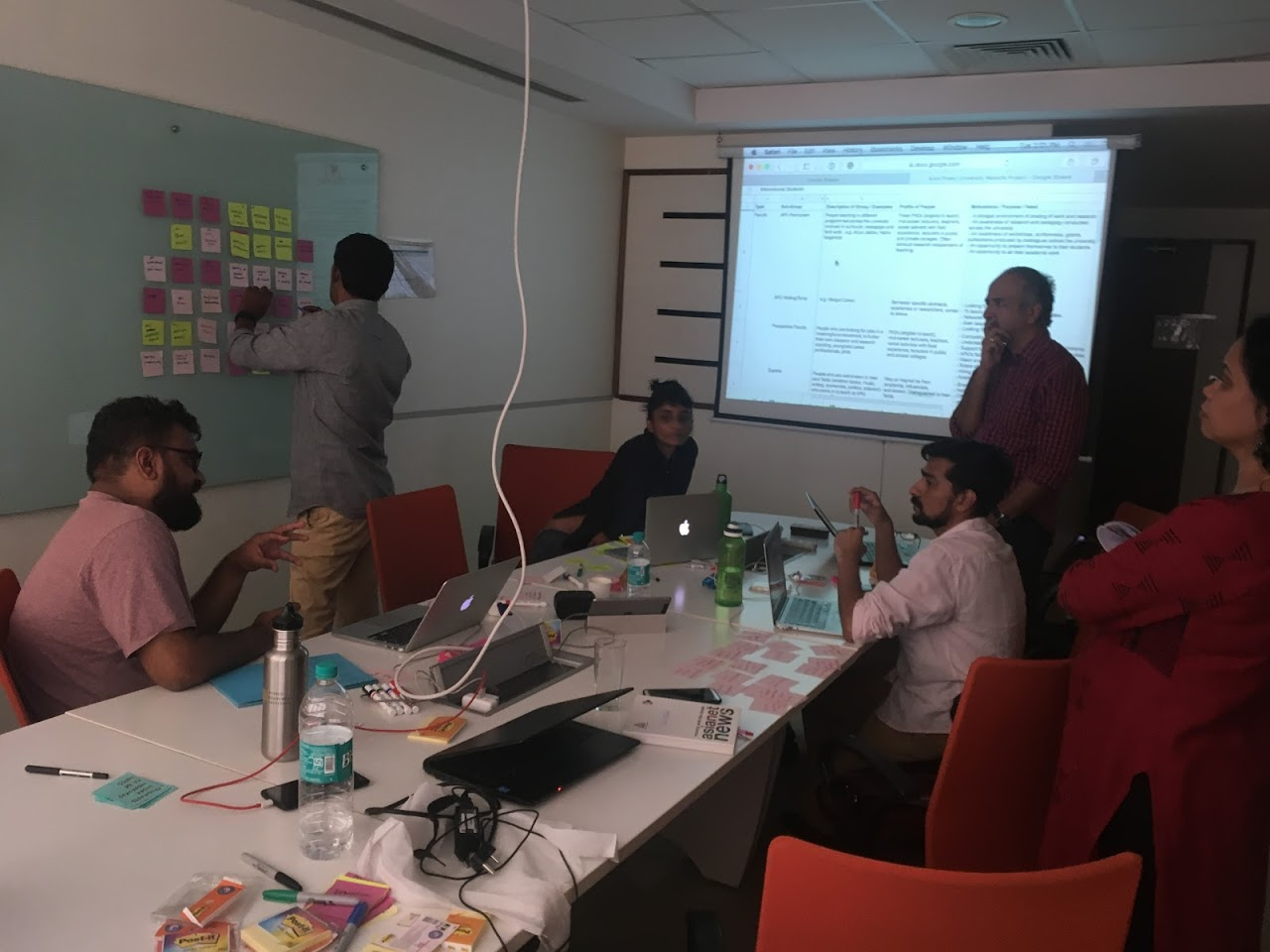

World IA Day

World Information Architecture Day (WIAD) is a one-day annual celebration to evangelise the practice of information architecture and is held in dozens of locations across the world. Souvik has been the local organiser for New Delhi since 2018 and, with the help of Abhishek and Namita, organised the 2019 edition on 23rd Feb. This was the second year of Miranj supporting WIAD in New Delhi. The event brought together people from various backgrounds, including designers, lawyers and architects, to discuss various topics in Information Architecture.

DesignxDesign Exposé 41

DesignxDesign, an initiative by Alliance Française de Delhi and Studio IF, has been nurturing the design and creative community since 2010. Through exposés, round tables, exhibitions and tête-à-têtes the community facilitates conversations among professionals and educators in various fields of design — Architecture/Habitat, Graphic/Communication, Product/Industrial and Apparel/Textile. We were invited to present our work at DesignxDesign Exposé 41 alongside RLDA – an architecture studio based in New Delhi. Incidentally, we were also the first New Media/Digital Design studio to have taken the stage at a DesignxDesign Exposé. The event took place at Alliance Française de Delhi on November 28, 2019.

Image Courtesy DesignxDesign on Facebook

This was the first time Prateek and I shared a stage. We used the opportunity to talk about our philosophy, share how the web is more than just a visual medium and show some of our work spanning several years. You can take a look at our slides on SpeakerDeck. The session was also broadcast live on Facebook so there’s a video if you’re interested.

We Grew

Let’s be honest, we suck at growing our team. It took us 8 years and a few hits and misses (more on this below) to find the right candidate. But now that we have, please meet Archit Chandra who joined our team as a web developer.

Archit is a self-taught web developer who is keen to learn different aspects of web technologies. Before joining Miranj he ran an independent webshop called GreyThink Labs where he created numerous company websites and online news publications. His experience was a great fit for Miranj — not only did he actively work on CMS-based websites but he also had a good understanding of how small creative businesses run. From the get-go, Archit has brought in some fresh opinions in our studio and has always been challenging our ways of doing things. He’s an avid listener of podcasts, loves reading books and is a passionate follower of Chelsea FC.

Setbacks

Reflections are incomplete without acknowledging the low-points. While many of them feel part-and-parcel of running a business a couple of them stand etched in our memories.

The first one was a bumpy project where the communication with a collaborator had broken down. It was a frustrating experience: misaligned expectations, unexpected turns, the client expressing surprise, uncomfortable meetings and more. We’d learned a lot from a similar situation many years back where the communication between the client and a collaborator had broken down. But this time around we didn’t directly own the client relationship. As a result, we faced significant challenges in navigating expectations, steering conversations and managing resentment. Eventually, we were able to take the project to closure but it left behind a sour taste. The entire experience reaffirmed to us the importance of project management. Creative services are likely to find project management unexciting. But laying out a clear plan and process so that each stakeholder understands her/his role and responsibilities can go a long way in averting a fallout. Clearly, there’s so much to learn. If you have any thoughts or experiences on this subject, do write to us.

The other setback was our first experience of a wrong hire. Miranj has hired only a handful of times and we’re far from mastering the art of hiring (or dealing with bad experiences). It was a repeating cycle of conflict and attempts to resolve issues. Every conversation ended in hope for change but eventually resulted in disappointment. We kept trying for a few months but eventually had to call it off. Letting go of someone is not easy. We’d experienced a lot of uncertainty and guilt a few years back when we had to let go of someone for entirely different reasons. But this time, despite knowing that we were making the right decision, it still felt bad.

Recap

2019 started slow and breezy, turned into a raging mid-year and came to a calm end. Through the year we evolved our services, learned new tools and techniques, entered new technical partnerships and undertook new challenges. It was a year of growth in every possible sense — in our skills, in our experiences, in our revenue, in our service offerings and even our team size. Feels good to have accomplished so much last year.

2019 in Numbers

- Undertook 12 client projects

- Worked with 6 collaborators

- Delivered 2 talks

- Hosted 1 event

- Plugins: 1 new release, 6 updates

- Worked with/in 6 countries

- Worked with 5 non-profit organisations

- 4 co-workers

- 4 workshops

- 1 new team member

Clearly, our in-review posts are published quite late. If you’re curious about what we’re up to this year you need not wait until mid-2021. Earlier this year we started an occasional newsletter. The next edition will be published shortly and we’ll tell you what’s been cooking in 2020. Do subscribe and expect an update soon.